The estat vif Command - Linear Regression Post-estimation

The estat vif command calculates the variance inflation factors (VIFs) for the independent variables in your model. The VIF for an independent variable is the ratio of the variance of its coefficient in the full model with multiple independent variables (MV) to the variance that coefficient would have in a model containing only that one independent variable (OV), that is, MV/OV. It is used to test for multicollinearity, which is where two or more independent variables are correlated with each other closely enough that they can be used to reliably predict one another.
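The same quantity is usually written in terms of an auxiliary regression. As a sketch in standard notation (the symbols below are introduced here for illustration and do not appear in Stata's output), let R_j^2 be the R-squared obtained by regressing the j-th independent variable on all of the other independent variables:

```latex
\mathrm{VIF}_j = \frac{1}{1 - R_j^2}
```

When the other independent variables explain none of the variation in variable j, R_j^2 is 0 and the VIF is 1. As R_j^2 approaches 1, the VIF grows without bound. The 1/VIF column that estat vif prints next to each VIF is therefore equal to 1 - R_j^2.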

If there is multicollinearity between 2 or more independent variables in your model, it means those variables are not truly independent. An OLS linear regression estimates the relationship between the dependent variable and each independent variable while holding the other independent variables constant. The regression coefficient for an independent variable represents the average change in the dependent variable for each 1 unit change in that independent variable, assuming all other independent variables are kept constant. Multicollinearity undermines this interpretation, because when one collinear variable changes, at least one other independent variable tends to change with it rather than remaining constant. As a result, the regression cannot cleanly separate the effects of the collinear variables, and the standard errors of their coefficients are inflated.

The most common cause of multicollinearity arises because you have included several independent variables that are ultimately measuring the same thing. For example, you have an independent variable for unemployment rate and another for the number of job applications made for entry-level positions. Both these variables are ultimately measuring the number of unemployed people, and will both go up or down accordingly. For this kind of multicollinearity you should decide which variable is best representing the relationships you are investigating. You can then remove the other similar variables from your model.

Another cause of multicollinearity is when two variables are proportionally related to each other. For example, you have an independent variable that measures a person’s height, and another that measures a person’s weight. These variables are proportionally related to each other, in that a person with a higher weight tends to be taller, while a person with a lower weight tends to be shorter. In this case the variables are not simply different ways of measuring the same thing, so it is not always appropriate to just drop one of them from the model. What you may be able to do instead is convert these two variables into one variable that measures both at the same time. In the example above, a neat way of capturing a person’s height and weight in the same variable is to use their Body Mass Index (BMI) instead, as this is calculated from a person’s weight and height.
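As a minimal sketch of this kind of conversion, the commands below build a BMI variable using the standard formula of weight in kilograms divided by height in metres squared. The variable names height_m and weight_kg and the three rows of data are made up purely for illustration.

```stata
clear
* Hypothetical example data: height in metres, weight in kilograms
input height_m weight_kg
1.60 55
1.75 70
1.90 95
end

* Combine the two proportionally related variables into one
generate bmi = weight_kg / (height_m^2)
label variable bmi "Body Mass Index (kg per square metre)"
list
```

The single bmi variable could then replace height and weight in a regression, in the same way the worked example below combines two variables from the auto dataset.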

To interpret the variance inflation factors you need to decide on a threshold, beyond which your VIFs indicate significant multicollinearity. Different statisticians and scientists have different ‘rules of thumb’ regarding when your VIFs indicate significant multicollinearity. The most common rule says that an individual VIF greater than 10, or an overall average VIF significantly greater than 1, is problematic and should be dealt with. However, some are more lenient and state that as long as your VIFs are less than 30 you should be ok, while others are far stricter and consider any VIF above 5 unacceptable. Which threshold you use will depend on the field you are in and how robust your regression needs to be. For the examples outlined below we will use the rule of a VIF greater than 10 or an average VIF significantly greater than 1.
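Whichever threshold you choose, the check itself can be automated. The following is a rough sketch, assuming it is run from a do-file and relying on the results that estat vif leaves behind in r(vif_#) and r(name_#). The local macro names k and threshold are my own, and the model is one of those used in the worked examples below.

```stata
sysuse auto, clear
quietly regress price mpg rep78 weight length

* Number of independent variables in the model just fitted
local k = e(df_m)
* Rule-of-thumb cut-off; change this to suit your field
local threshold = 10

quietly estat vif
forvalues i = 1/`k' {
    * Report only the variables whose VIF is above the cut-off
    if r(vif_`i') > `threshold' {
        display "`r(name_`i')': VIF = " %6.2f r(vif_`i') " exceeds `threshold'"
    }
}
```

Setting the threshold in one place makes it easy to re-run the same check under a stricter rule (for example 5) or a more lenient one (for example 30).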

How to Use:

*Perform a linear regression, then

estat vif

OR for a regression with no constant

estat vif, uncentered

Worked Example 1:

In this example I use the auto dataset. I am going to generate a linear regression, and then use estat vif to generate the variance inflation factors for my independent variables.

In the command pane I type the following:

sysuse auto, clear

regress price mpg rep78 weight displacement

estat vif

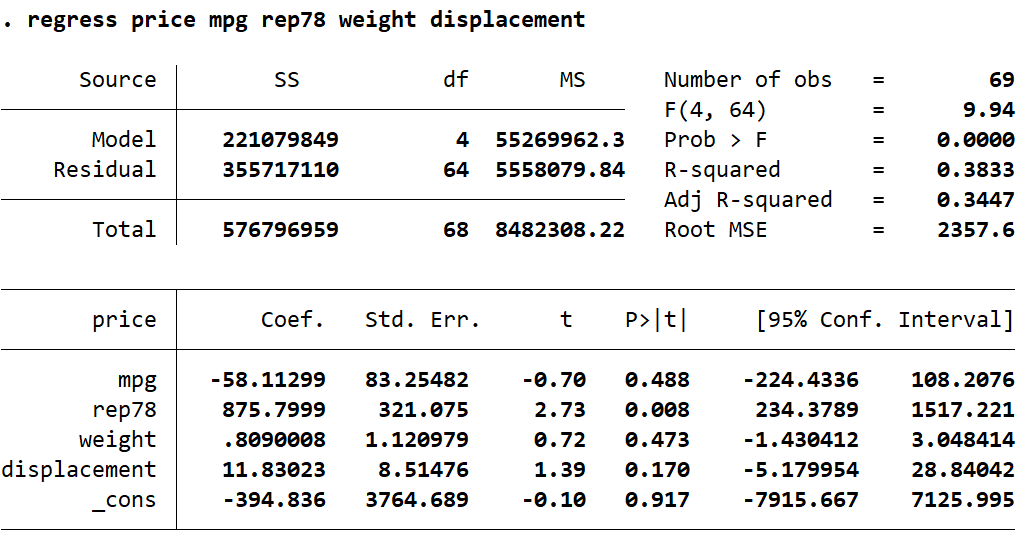

This gives the following output in Stata:

Here we can see the VIFs for each of my independent variables. While no VIF goes above 10, weight does come very close. Also, the mean VIF is greater than 1 by a reasonable amount. I am going to investigate a little further using the correlate command. In the command pane I type the following:

correlate weight displacement mpg rep78

This gives the following output in Stata:

From this I can see that weight and displacement are highly correlated (0.9316). This makes sense, since a heavier car generally needs a larger engine and so has a larger displacement value. In this case, weight and displacement are similar enough that they are effectively measuring the same thing. Therefore, there is multicollinearity because the displacement value is largely predictable from the weight value. Because displacement adds little information beyond what weight already provides, it can be safely removed from the model. I will now re-run my regression with displacement removed to see how my VIFs are affected.

In the command pane I type the following:

regress price mpg rep78 weight

estat vif

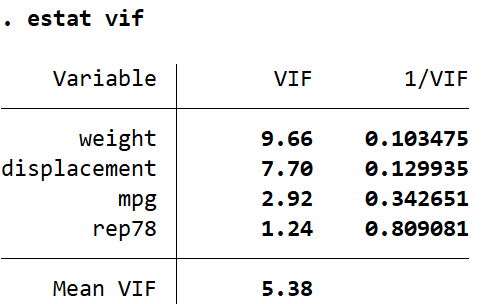

This gives the following output:

Here we can see by removing the source of multicollinearity in my model my VIFs are within the range of normal, with no rules violated.
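To see where these numbers come from, a VIF can be reproduced by hand: regress one independent variable on the others and compute 1/(1 - R-squared). The sketch below does this for weight in the reduced model. The variable name insample is my own, and it is there to make sure the auxiliary regression uses the same observations as the main one (rep78 has missing values).

```stata
sysuse auto, clear
quietly regress price mpg rep78 weight

* Mark the observations actually used in the main regression
generate byte insample = e(sample)

* Auxiliary regression: weight on the other independent variables
quietly regress weight mpg rep78 if insample

* VIF = 1 / (1 - R-squared of the auxiliary regression)
display "VIF for weight = " 1/(1 - e(r2))
```

The displayed value should match the VIF that estat vif reports for weight after the main regression.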

Worked Example 2:

Let’s take a look at another regression with multicollinearity, this time with proportional variables. In the command pane I type the following:

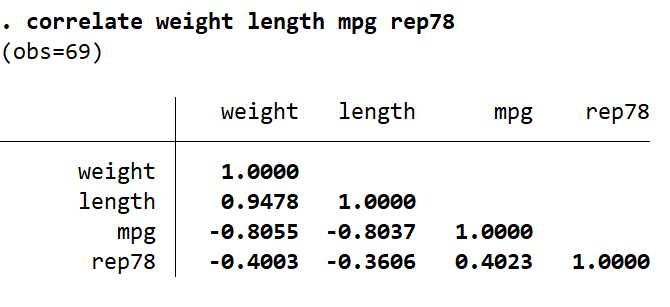

regress price mpg rep78 weight length

estat vif

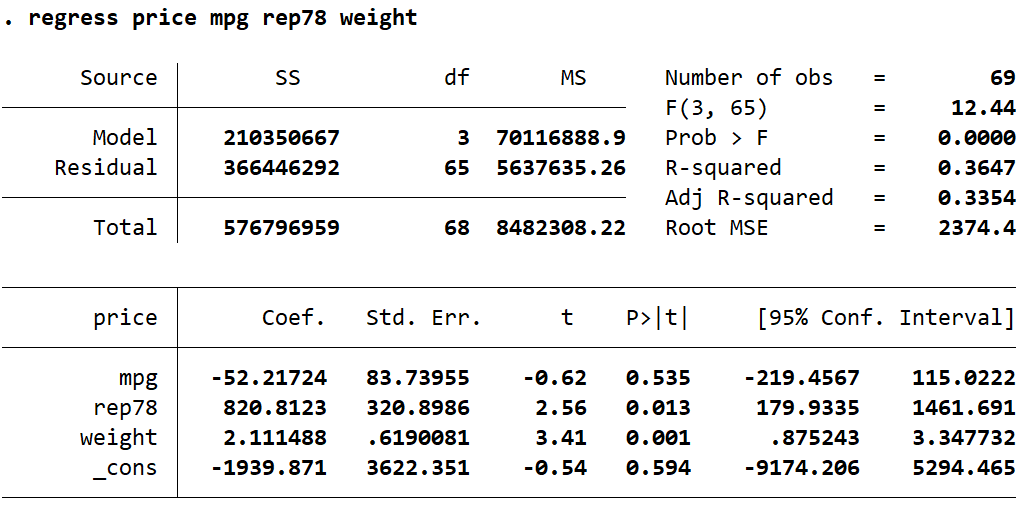

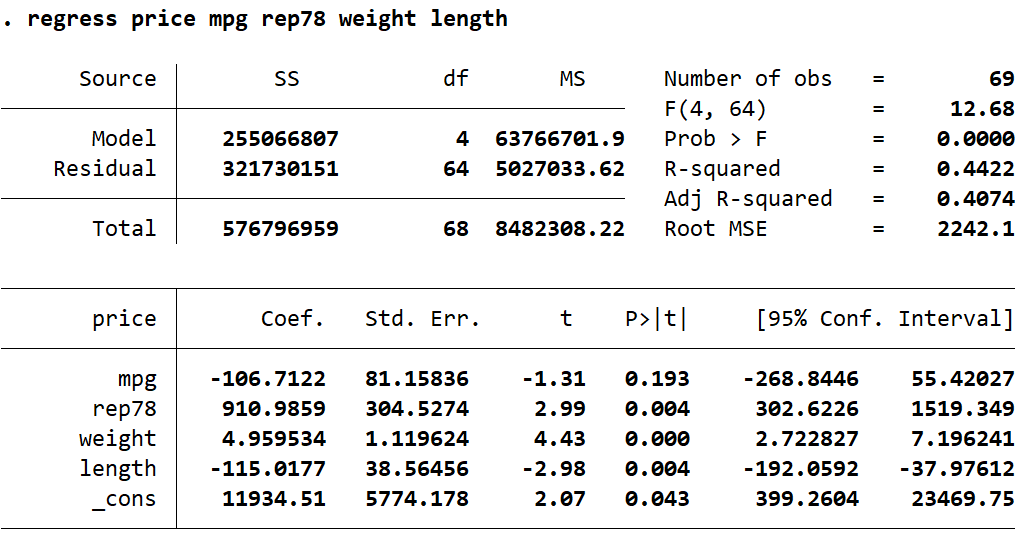

This gives the following output in Stata:

For this regression both weight and length have VIFs that are over our threshold of 10. We already know that weight and length are going to be highly correlated, but lets look at the correlation values anyway. In the command pane I type the following:

correlate weight length mpg rep78

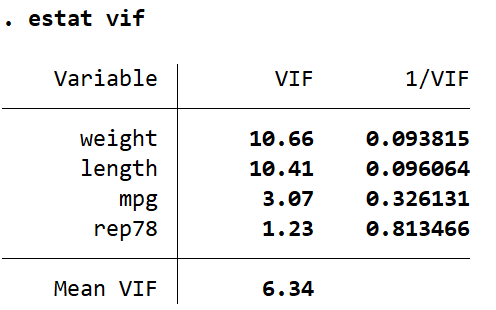

This generates the following correlation table:

As expected weight and length are highly positively correlated (0.9478). However, unlike in our previous example, weight and length are not measuring the same thing. I want to keep both variables in my regression model, but I also want to deal with the multicollinearity. To do this, I am going to create a new variable which will represent the weight (in pounds) per foot (12 inches) of length. In the command pane I type the following:

generate wbl = (weight/length) * 12

label variable wbl "Weight in pounds (lbs) per foot (12 inches) of length"

regress price mpg rep78 wbl

estat vif

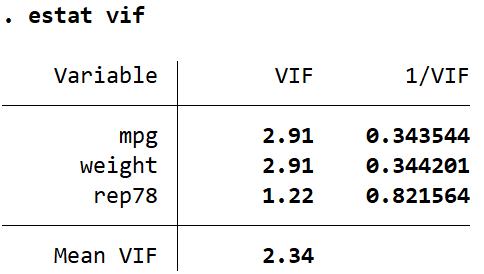

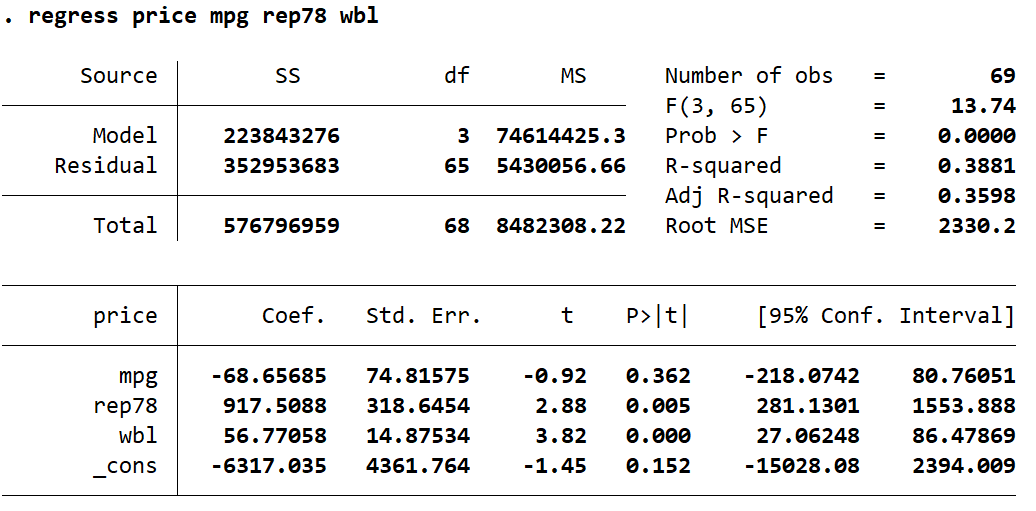

This gives the following output:

Here we see our VIFs are much improved, and are no longer violating our rules. By combining the two proportionally related variables into a single variable I have eliminated multicollinearity from this model, while still keeping the information from both variables in the model.

In this post I have given two examples of linear regressions containing multicollinearity. I used the estat vif command to generate variance inflation factors. I then used the correlate command to help identify which variables were highly correlated (and therefore likely to be collinear). Some knowledge of the relationships between my variables allowed me to deal with the multicollinearity appropriately.

I did not cover the use of the uncentered option that can be applied to estat vif. Generally, if your regression has a constant you will not need this option. If you run a regression without a constant (e.g. using the noconstant option with the regress command) then you can only run estat vif with the uncentered option. You can also use uncentered to look for multicollinearity with the intercept of your model. However, you should be wary when using this on a regression that has a constant. A normal linear regression will contain some multicollinearity with the constant that is entirely structural, and the uncentered VIF values do not separate this from multicollinearity between the independent variables themselves. Therefore, your uncentered VIF values will appear considerably higher than would otherwise be considered normal.
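For completeness, here is a minimal sketch of the no-constant case using the same auto dataset. The choice of independent variables is only for illustration.

```stata
sysuse auto, clear

* Regression without a constant term
regress price mpg rep78 weight, noconstant

* Centered VIFs are not available here, so request uncentered ones
estat vif, uncentered
```

Because uncentered VIFs tend to be larger than centered ones, the rules of thumb discussed earlier should be applied to them with caution.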